Seedance 2.0 Review (2026): The Best AI Video Generator

AI video generation has taken the world by storm in 2026, with one standout model capturing the industry's attention: Seedance 2.0. For a comprehensive overview of the product and access to additional resources, check out the official Seedance 2.0 Home. Ready to create your own AI videos? Explore the Try Free Seedance 2.0 Generator to start generating your custom content.

What is Seedance 2.0?

Seedance 2.0 is a multimodal AI video generator developed by ByteDance (the parent company behind TikTok). It combines text, images, video references, and audio to produce cinematic video clips. Unlike text-only AI tools, Seedance 2.0 offers much finer control over the creative process and reportedly achieves a 90%+ usable output rate in industry benchmarks. For more insight into its capabilities, check out TechCrunch's coverage of AI and consumer tech.

In this review, we put Seedance 2.0 to the test across multiple use cases: cinematic storytelling, complex action sequences, multi-reference generation, and audio synchronization. So, is Seedance 2.0 worth the hype in 2026? Keep reading to find out!

Why It Stands Out

Why Seedance 2.0 Feels More Convincing in Real Use

What makes Seedance 2.0 stand out is not simply that it can generate AI video. It feels more convincing in practice because the results often look more directed, the audio and visuals feel more unified, and the overall creative process gives users a stronger sense of control from the beginning.

It feels more cinematic from the start

One of the most noticeable things about Seedance 2.0 is how quickly its output starts to feel cinematic rather than merely generated. Motion often looks more deliberate, scene composition feels more intentional, and the final result tends to carry more of the visual rhythm people usually expect from short-form storytelling or polished branded content.

Audio and visuals feel like part of the same idea

A lot of AI video tools can produce impressive clips, but the experience often feels fragmented when sound and visuals do not fully support each other. Seedance 2.0 feels stronger here because scenes come across as more cohesive, which makes the output easier to imagine in actual creative use rather than as a rough technical demo.

It gives creators a better sense of control

Another reason Seedance 2.0 leaves a strong impression is that it feels easier to guide with intent. Instead of relying on a simple prompt-and-hope workflow, it supports a more directed creative process, which is especially useful for users who care about pacing, mood, scene continuity, and overall presentation quality.

It gets to usable results faster

In real creative workflows, speed is not just about how fast a clip renders. What matters more is how quickly the result becomes something worth refining, sharing, or building on. Seedance 2.0 feels more practical because it often shortens that gap between first output and genuinely usable material.

That is ultimately where Seedance 2.0 feels different. It does not just offer more AI video capability on paper. It feels closer to a creative tool that helps people move from idea to polished visual output with more confidence, better control, and a stronger sense of creative direction.

2. How We Tested Seedance 2.0 (Methodology)

We used a fixed test environment and clear evaluation criteria so this review is reproducible and honest.

Test environment

- Duration: 12+ hours of hands-on testing.

- Credits used: ~50 generations across 4 scenario categories.

- Platform: Seedance 2.0 web interface; ByteDance ecosystem app; cloud inference.

- Test date: March 2026. Model version: Seedance 2.0.

Evaluation criteria (how we scored)

We rated Seedance 2.0 on: motion physics and body consistency; prompt adherence and scene coherence; camera stability and cinematic feel; facial animation and lip sync; resolution and detail; ease of use and learning curve; and value for money. Scores are out of 10 and are subjective for comparison purposes.

3. Real Generation Showcases

These examples were generated with Seedance 2.0. Instead of relying on claims alone, this section focuses on actual generated results. It offers a closer look at how Seedance 2.0 translates prompts into video, with attention to motion, composition, consistency, and creative control.

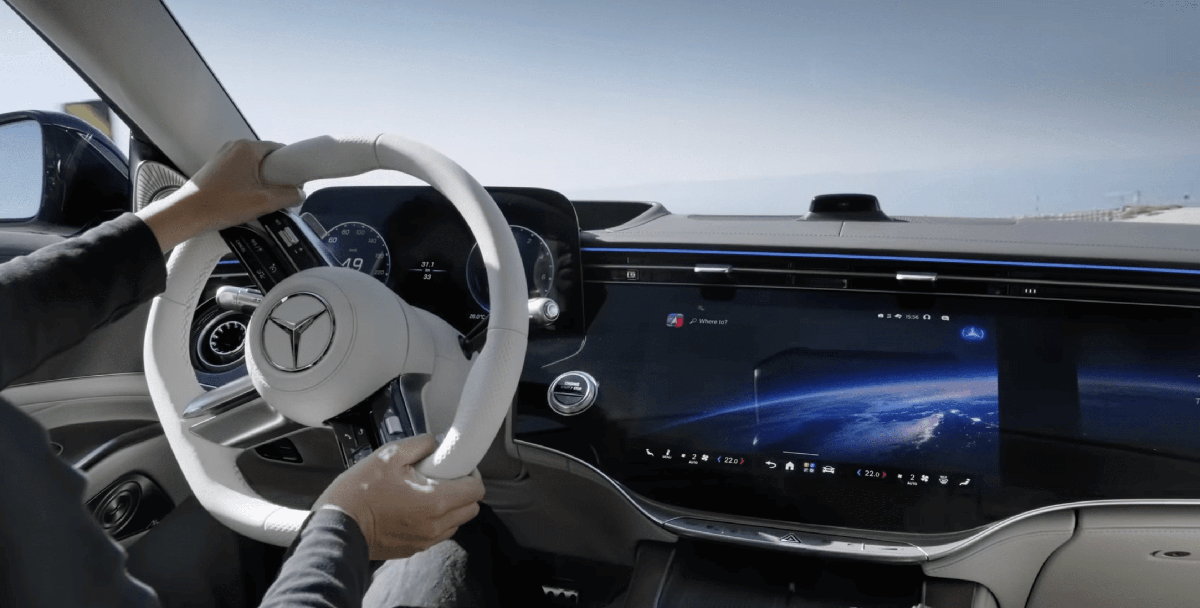

Mercedes S Class 2026 – Vienna to Snow Mountain

01

Prompt

New Mercedes S class 2026 is driving in the street of Vienna city. Sunny day. camera is dynamically following the car and rotating around the car. car is driving over the hi modern bridge in beautiful nature with sunny weather. Camera follows the car shows the rear view of the car. Beautiful Caucasian man, wearing a black suite, white shirt, black tie and black sunglasses is driving the car as professional driver. In the backseat is sitting a beautiful blond woman with beautiful 10 years blond girl. The car is climbing on snow mountain with lot of snow and gets in front of beautiful hotel on the top of the mountain that is covered by snow, sunny day, blue sky. Last shot - camera rotates around the parked car in front of a hotel. @[Image 1] is reference for front view of a car design. Image2 @[Image 2] is reference for car rear view. Image3 @[Image 3] is reference for backset car design. Image4 @[Image 4] is reference for front interior car design.

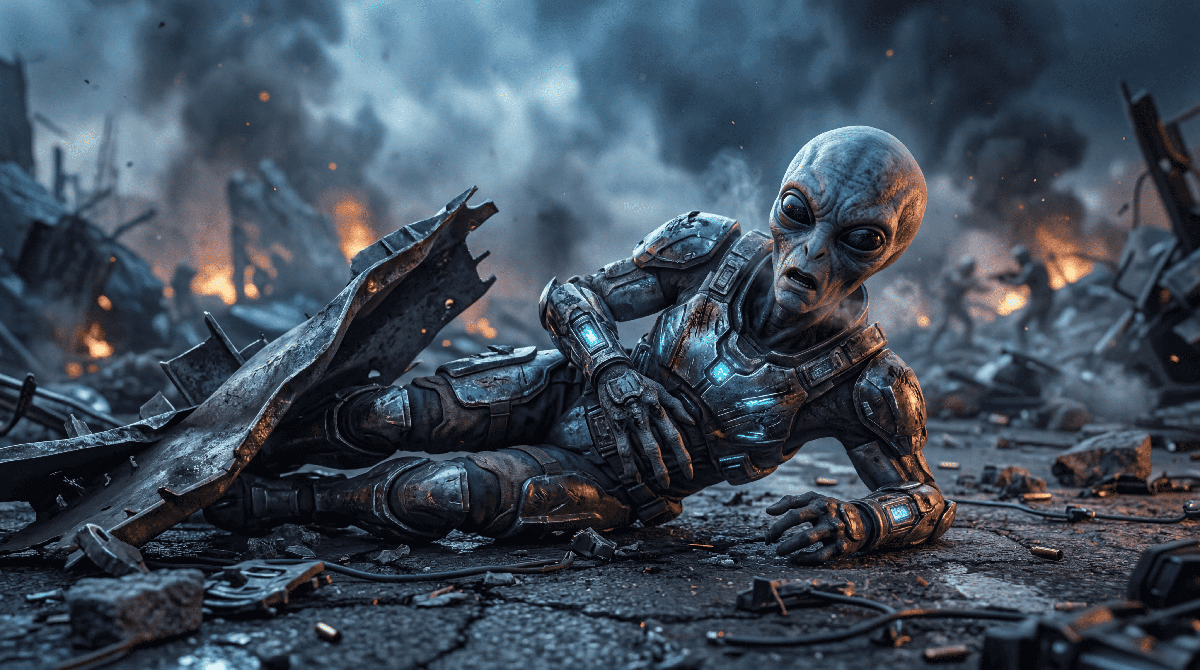

Invaded City – Wounded Alien Soldier (First/Last Frame)

02

Prompt

Starting exactly from @Image 1 as the opening frame and ending in the composition of @Image 2 as the final frame, maintain full environment continuity between the ruined city skyline and the battlefield debris. The scene begins with a wide aerial view of a devastated city under a stormy sky, where several skyscrapers are partially collapsed, thick black smoke rises into the air, fires burn across the streets, and debris is scattered through broken intersections. The camera slowly glides forward through the destroyed skyline as dust, smoke, and ash drift naturally in the atmosphere, then gradually tilts downward and transitions into a slow cinematic dive toward street level. In the distance, damaged buildings continue to crumble, concrete dust pours from shattered floors, and the camera passes through dense smoke clouds and falling debris, moving seamlessly from the large-scale urban destruction into the ground-level battlefield. As the smoke parts, an alien soldier is revealed trapped beneath twisted wreckage and broken metal debris, its damaged armor flickering with weak blue light. The alien struggles faintly among burning rubble and drifting ash while the camera continues pushing in. Finally, the shot settles into the exact composition of @Image 2: a close-up of the wounded alien lying in the debris, groaning in pain, slightly lifting its head, and muttering with deep regret, “We should have attacked the human species…” Smoke rolls behind it as distant explosions echo across the ruined city. Audio should include low apocalyptic ambience, distant building collapses, crackling fire, wind blowing ash, strained alien breathing and groaning, and the final spoken line delivered weakly with an echoing battlefield atmosphere.

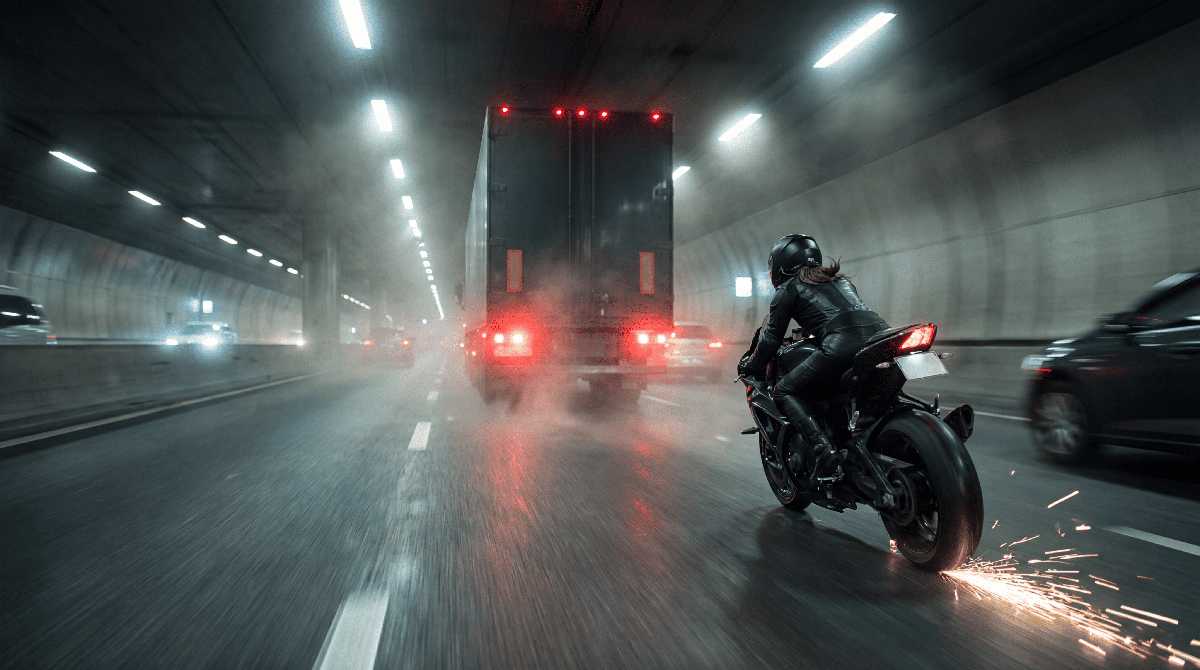

High-Speed Pursuit – Biker in Tunnel

03

Prompt

Starting from @Image1 as the exact first-frame reference, maintain consistent biker appearance, motorcycle design, cargo truck, and tunnel environment throughout the entire sequence. The scene opens with a wide rear tracking shot inside a misty highway tunnel, where a black-clad biker races forward at extreme speed, the motorcycle leaning dangerously close to the asphalt as bright sparks scrape across the road. Ahead, a large cargo truck barrels through traffic while the camera follows tightly behind the biker, with tunnel lights streaking overhead to enhance the sense of speed and pressure. The perspective then shifts dynamically to the truck driver’s point of view through the side mirror, where the biker rapidly closes the distance, weaving aggressively and precisely between surrounding cars as the camera pushes closer into the mirror reflection, emphasizing the biker’s relentless acceleration. The sequence then cuts to a dramatic aerial view inside the tunnel, showing the truck speeding ahead while the motorcycle darts through traffic behind it, before the biker suddenly accelerates hard and moves directly into position behind the truck’s rear doors. Finally, in a fast side tracking shot, the biker rises slightly on the foot pegs and launches off the speeding motorcycle, leaping toward the back of the truck while the bike continues sliding behind across the tunnel road, trailing a shower of sparks. The overall motion should feel cinematic, intense, and continuous, with strong speed, tunnel light streaks, drifting mist, traffic pressure, and seamless action choreography.

Cinematic Racing – Sunset Racetrack

04

Prompt

A cinematic racing scene begins on a professional racetrack at sunset. A sleek sports car waits at the starting line while the camera slowly moves around the vehicle, capturing reflections on the polished body and the tension before the race begins. The car suddenly accelerates forward, tires gripping the track as the camera follows closely from multiple cinematic angles. Dust and light smoke appear as the car drifts around sharp corners at high speed. Add dynamic camera movements including whip pans, speed ramps, and motion blur to emphasize the feeling of acceleration. The racing sequence builds intensity as the car speeds through long straight roads and dramatic turns. End the sequence with the car crossing the finish line in a powerful final moment.

4. How to Use Seedance 2.0 (Quick Walkthrough)

Below is a real, end-to-end generation flow using the Seedance 2.0 web interface. All screenshots are from our hands-on testing session.

Three steps from idea to professional video.

STEP 01

Enter Prompt & References

We input a cinematic prompt and optionally attach image or video references using Seedance's @reference system.

STEP 02

Configure Duration & Audio

Here we set clip length, camera style, and enable native audio generation when needed.

STEP 03

Generate & Export

Generation typically takes 1-3 minutes depending on clip length. Results can be previewed and exported directly.
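The @reference tags from step 01 appear in three spellings across the showcase prompts: `@[Image 1]`, `@Image 1`, and `@Image1`. As a minimal sketch, a pre-flight check could list the image numbers a prompt mentions and flag any that have no attached file. The helper names and the regular expression below are hypothetical and are not part of Seedance 2.0; they only assume the tag spellings seen in this review.

```python
import re

# Hypothetical pattern covering the tag spellings seen in the showcase prompts:
# "@[Image 1]", "@Image 1", and "@Image1".
REFERENCE_TAG = re.compile(r"@\[?Image\s*(\d+)\]?")


def referenced_images(prompt: str) -> list[int]:
    """Return the sorted, de-duplicated image numbers a prompt refers to."""
    return sorted({int(n) for n in REFERENCE_TAG.findall(prompt)})


def missing_references(prompt: str, attached: int) -> list[int]:
    """List image numbers used in the prompt that exceed the attached file count."""
    return [n for n in referenced_images(prompt) if n > attached]


if __name__ == "__main__":
    prompt = "Starting exactly from @Image 1 and ending in the composition of @Image 2."
    print(referenced_images(prompt))
    print(missing_references(prompt, attached=1))
```

Running a check like this before spending credits may catch a prompt that names a reference image you forgot to upload.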

👉 For a full step-by-step tutorial with prompts and tips, see our complete How to Use Seedance 2.0 Prompt Guide.

5. Deep Dive: Key Features

Seedance 2.0’s core capabilities center on true multimodal input, native audio-visual synchronization, multi-shot narrative generation, high-fidelity visual quality, and efficient, scalable production. Below we break down what each pillar does, why it matters in practice, and who benefits most.

Text-to-Video

Text-to-video lets you drive generation from written prompts. The platform supports multiple aspect ratios and resolutions (480p, 720p, 1080p) and lets you set generation mode and camera behavior. You can also use text and image input together: describe the scene and supply a reference image for style or character consistency. That’s useful for branded content and for locking in a look before animating.

Why it matters: Scripts and copy are the starting point for many campaigns and explainers. Being able to pair them with reference art in one step keeps creative direction clear. Who benefits most: Writers, content leads, and anyone who works from scripts or storyboards. The Seedance 2.0 Prompt Guide helps refine descriptions. Available durations depend on the tier (5s and 10s on lower tiers, extended or maximum on higher tiers), so check your plan before deciding on clip length.

Image-to-Video

Image-to-video animates still images. The product offers image enhancement before animation, so you can improve source quality before generating motion. You can pair images with text prompts for clearer creative direction. Output can be tailored by aspect ratio, resolution, and duration (5s and 10s on lower tiers, extended or maximum on higher tiers).

Why it matters: Concept art, product shots, and storyboard frames often need to become motion. A single pipeline for enhancement and animation reduces handoffs. Who benefits most: Designers, art directors, and anyone producing social clips, product teasers, or previz. Multiple video formats and aspect ratios let you match platform specs (e.g., vertical for Reels, landscape for YouTube) in one tool.

Audio-Visual Sync

The platform emphasizes native audio-visual synchronization: the system is built to align generated video with audio (dialogue, music, or sound design) rather than treating audio as an afterthought. That makes it relevant for ads, explainers, and any content where lip-sync or timed visuals matter.

Why it matters: Voice-driven content is the norm in marketing and education. When the tool is designed for sync from the start, you avoid the “video first, audio slapped on” workflow. Who benefits most: Marketing and advertising teams, e-learning producers, and anyone with a fixed script or voiceover that must drive the cut. We did not run independent sync measurements, but the positioning clearly differentiates it from text-to-video-only tools.

Multi-Shot Storytelling

Seedance 2.0 supports multi-shot narrative generation and stable characters across shots. Timeline-level regeneration lets you refine specific segments without redoing the whole video. That aligns with the stated use cases: marketing, content creation, agencies, education, and enterprise.

Why it matters: Single-clip tools hit a ceiling fast. Campaigns and narratives need sequences and consistent characters. Who benefits most: Creative agencies, brand teams, and anyone producing cinematic long-form or ad campaigns with more than one scene. Stable characters and timeline-level control support story-driven work rather than one-off clips.

UI, Workflow, and Ease of Use

The documented workflow is linear: add prompts or assets, customize output (aspect ratio, resolution, camera behavior, generation mode), then generate and export. The interface is presented as a focused path rather than a dense suite of tabs.

Why it matters: Predictable steps reduce friction for teams that need to ship volume. Who benefits most: Anyone onboarding new users or integrating the tool into a pipeline. Customization levers (aspect ratio, 480p–1080p, camera behavior, generation mode) are explicit.

6. Pricing & Value for Money

If you are evaluating Seedance 2.0 pricing in 2026, the core model is straightforward: one-time credit packs, no mandatory subscription lock-in, and credits that do not expire. That structure is especially useful for teams that ship in bursts and want predictable AI video costs without recurring monthly pressure.

| Plan | Price (one-time) | Credits | Videos (up to) | Notes |
|---|---|---|---|---|
| Base | $9.9 | 99 | 49 | Best for testing and light personal usage. |
| Pro | $29.9 | 370 | 185 | Strong fit for regular creators and short-form production. |
| Ultimate | $49.9 | 700 | 350 | Better for heavier output and longer-duration workflows. |
| Creator | $99.9 | 1665 | 832 | Includes commercial license, API access, and support. |

Value for money / cost per generation

Exact cost per output depends on clip duration and resolution, so credit burn changes between 5s and 10s clips, and between 480p and 1080p exports. In practice, Seedance 2.0 feels cost-efficient when you need controllable, reusable results instead of constantly regenerating from scratch.
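To make the per-output cost concrete, the arithmetic behind the pricing table can be sketched as follows. The plan figures are copied from the table; the "credits per video" figure is only what the listed maximum video counts imply (about 2 credits per video at the cheapest settings), not a published rate, and longer or higher-resolution clips will cost more.

```python
# Plan data copied from the pricing table: (price in USD, credits, max videos).
PLANS = {
    "Base": (9.9, 99, 49),
    "Pro": (29.9, 370, 185),
    "Ultimate": (49.9, 700, 350),
    "Creator": (99.9, 1665, 832),
}


def unit_costs(price: float, credits: int, videos: int) -> tuple[float, float]:
    """Return (USD per credit, USD per video at the plan's maximum video count)."""
    return price / credits, price / videos


if __name__ == "__main__":
    for name, (price, credits, videos) in PLANS.items():
        per_credit, per_video = unit_costs(price, credits, videos)
        # Integer division shows the credits-per-video rate implied by the table.
        print(f"{name:<9} ${per_credit:.3f}/credit  ${per_video:.3f}/video  "
              f"{credits // videos} credits per video (implied)")
```

On these numbers the best-case cost falls from roughly $0.20 per video on Base to about $0.12 on Creator, so the larger packs mainly pay off if you expect to use the volume.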

One more practical point: hidden cost usually comes from longer scenes, higher resolutions, and re-renders during iteration. Commercial usage rights and API workflows are concentrated in higher tiers, so teams should map plan choice to real delivery needs, not just headline credit count. For full, up-to-date details, use the official Seedance 2.0 Pricing Plans.

7. Alternatives & Competitors

The AI video market is crowded, and the “right” choice rarely comes from hype. In practice, it comes down to workflow fit, creative priorities, and what your production actually needs day to day. This section compares Seedance 2.0 with Sora 2, Wan 2.6, and Kling 3.0 from an editorial, use-case perspective—focused on practical outcomes like creative control, reference-driven direction, and native audio alignment, not lab-style benchmarks.

Quick Comparison: Seedance 2.0 vs Wan 2.6 vs Sora 2 vs Kling 3.0

| Dimension | Seedance 2.0 | Wan 2.6 | Sora 2 | Kling 3.0 | Notes |
|---|---|---|---|---|---|
| Motion Quality | ★★★★☆ (4/5) | ★★★★☆ (4/5) | ★★★★☆ (4/5) | ★★★★★ (5/5) | Among creators, Kling is widely regarded as one of the strongest models for motion fluidity. |
| Physics Accuracy | ★★★☆☆ (3/5) | ★★★☆☆ (3/5) | ★★★★★ (5/5) | ★★★★☆ (4/5) | Sora 2 remains the strongest fit for physics-style realism. Kling 3.0 is competitive in fast action shots, while Seedance 2.0 and Wan 2.6 are more dependent on prompt precision for physically complex moments. |
| Audio Sync | ★★★★★ (5/5) | ★★★☆☆ (3/5) | ★★★☆☆ (3/5) | ★★★☆☆ (3/5) | Seedance 2.0 is positioned around native audio-visual sync, which gives it a clearer edge for voiceover-heavy or timing-sensitive work. Wan 2.6, Sora 2, and Kling 3.0 are usually better treated as video-first pipelines. |
| Face/Character Consistency | ★★★★☆ (4/5) | ★★★☆☆ (3/5) | ★★★☆☆ (3/5) | ★★★☆☆ (3/5) | For multi-shot workflows, Seedance 2.0 is more reliable for stable character continuity. Wan 2.6, Sora 2, and Kling 3.0 can all work, but consistency usually depends more heavily on prompt and reference discipline. |
| Resolution & Detail | ★★★★☆ (4/5) | ★★★★☆ (4/5) | ★★★★★ (5/5) | ★★★★☆ (4/5) | Sora 2 often feels strongest for text-led scene richness. Seedance 2.0 and Kling 3.0 both produce detailed outputs, with Seedance generally feeling easier to steer in reference-driven workflows. |
| Creative Control | ★★★★★ (5/5) | ★★★☆☆ (3/5) | ★★★☆☆ (3/5) | ★★★☆☆ (3/5) | Seedance 2.0 remains the stronger fit for structured creative control: multimodal direction, reference-guided iteration, and shot continuity. Wan 2.6, Sora 2, and Kling 3.0 are usually more prompt-led in practice. |
| Ease of Use | ★★★★☆ (4/5) | ★★★★☆ (4/5) | ★★★★☆ (4/5) | ★★★★☆ (4/5) | Wan 2.6, Sora 2, and Kling 3.0 are all approachable for fast generation. If you need a more reference-driven, production-oriented process, Seedance 2.0 usually aligns better with iterative editing workflows. |
| Content Moderation | ★★★☆☆ (3/5) | ★★★★☆ (4/5) | ★★★★☆ (4/5) | ★★★★☆ (4/5) | Seedance 2.0 can be comparatively stricter in moderation for some character-forward concepts. Wan 2.6, Sora 2, and Kling 3.0 may allow broader experimentation depending on prompt framing and policy behavior. |
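Because the right choice depends on your own priorities, one way to read the ratings above is as a weighted average. The sketch below copies the star ratings from the comparison table; the weights are hypothetical examples, and the ratings themselves are subjective, so treat the output as a way to structure a decision rather than as a ranking.

```python
# Star ratings copied from the comparison table, in the column order of MODELS.
MODELS = ("Seedance 2.0", "Wan 2.6", "Sora 2", "Kling 3.0")
RATINGS = {
    "Motion Quality": (4, 4, 4, 5),
    "Physics Accuracy": (3, 3, 5, 4),
    "Audio Sync": (5, 3, 3, 3),
    "Face/Character Consistency": (4, 3, 3, 3),
    "Resolution & Detail": (4, 4, 5, 4),
    "Creative Control": (5, 3, 3, 3),
    "Ease of Use": (4, 4, 4, 4),
    "Content Moderation": (3, 4, 4, 4),
}


def weighted_scores(weights: dict[str, float]) -> dict[str, float]:
    """Weighted average rating per model; dimensions not listed get weight 1."""
    total = sum(weights.get(dim, 1.0) for dim in RATINGS)
    scores = {}
    for i, model in enumerate(MODELS):
        weighted = sum(weights.get(dim, 1.0) * row[i] for dim, row in RATINGS.items())
        scores[model] = round(weighted / total, 2)
    return scores


if __name__ == "__main__":
    # Equal weights across all eight dimensions.
    print(weighted_scores({}))
    # Hypothetical priorities for a physics-heavy action project.
    print(weighted_scores({"Physics Accuracy": 3.0, "Motion Quality": 2.0}))
```

With equal weights Seedance 2.0 comes out on top at 4.0, while tripling the weight on physics and doubling it on motion moves Sora 2 ahead, which matches the use-case framing in the sections that follow.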

7.1 Seedance 2.0 vs Sora 2: Physics Realism vs Production Control

This matchup is about how you want to “feel” the shot: Sora 2 is usually appealing for text-first cinematic imagination, while Seedance 2.0 is positioned for a more controllable, production-oriented workflow—particularly when audio alignment and multi-shot consistency matter.

Side-by-side demo prompt

“A ceramic coffee cup slips off the edge of a wooden table and crashes onto a stone floor in slow motion. Hot coffee splashes outward, ceramic fragments scatter realistically, and the camera stays low as droplets and shards move across the frame. Soft morning light from a nearby window, detailed debris motion, natural impact sound, cinematic realism.”

Seedance 2.0

You’ll generally get a more production-friendly execution when you treat the output as part of an edit pipeline. With multimodal workflow fit and native audio alignment, the scene rhythm is easier to steer toward a usable result.

Sora 2

The prompt’s physical beat can come across as more visually striking when the generation is primarily text-first. If your priority is physics-style realism, Sora 2 often feels like the more direct route.

Sora 2 is the better fit if your priority is text-first visual imagination and physics-style realism, while Seedance 2.0 is the better fit if your priority is controllable production, multimodal direction, and native audio sync.

7.2 Seedance 2.0 vs Wan 2.6: General Generation vs Structured Workflow

This comparison is about choosing a generation style. Wan 2.6 can be a relevant option if you’re exploring modern AI video generation and experimenting with visually driven prompts, while Seedance 2.0 is designed to fit structured multimodal workflows with multi-shot planning and stable character handling.

Side-by-side demo prompt

“A bicycle courier rides through a narrow neon market street after rain, weaving past pedestrians and glowing storefronts as the camera tracks alongside in cinematic slow motion. Water sprays from the tires, reflections ripple across the pavement, hanging signs sway overhead, and the scene maintains consistent character appearance from start to finish.”

Seedance 2.0

Seedance 2.0 tends to be the stronger fit when you need reference-driven control, multi-shot planning, and stable character handling. With native audio-synced storytelling, it’s better aligned with structured creative workflows.

Wan 2.6

Wan 2.6 is a reasonable choice for broader AI video experimentation and visually prompt-driven exploration. Depending on the prompt complexity, you may still need more iteration when you want tightly structured outputs.

Wan 2.6 is a reasonable choice for broader AI video experimentation, while Seedance 2.0 is the better fit for structured creative workflows, multimodal control, and audio-synced narrative output.

7.3 Seedance 2.0 vs Kling 3.0: The Motion & Narrative Showdown

To see how Seedance stacks up against Kling for cinematic motion and long-form feel, we fed both models the same prompt.

Side-by-side demo prompt

“A woman walks through a neon-lit city at night, rain reflecting on the pavement, cinematic slow motion.”

Seedance 2.0

Seedance 2.0 usually feels more production-oriented when continuity and controllability matter. It is easier to shape toward a final cut that matches brand tone, pacing, and scene intent.

Kling 3.0

Kling 3.0 can look bold and dynamic quickly, especially for visually punchy clips. In more structured storytelling scenarios, you may need additional iteration to keep continuity and shot-to-shot intent aligned.

If motion fluidity and extended narrative clips matter most, Kling 3.0 has the edge. If you care more about multi-reference control and built-in audio-visual cohesion, Seedance 2.0 is the stronger choice.

Best alternatives if Seedance 2.0 is not available

Depending on region, access, and workflow preference, some users may also consider other AI video tools such as Runway, Luma, or Pika—but the decision still comes back to what kind of production control and pacing you need.

8. Final Conclusion: Should You Use It?

Seedance 2.0 is a strong fit if you need a multimodal AI video generator with text, image, audio, and video inputs, native audio-visual sync, and multi-shot storytelling. It suits marketing and advertising teams, content creators and influencers, creative agencies and studios, education and e-learning producers, and business and enterprise users. One-time pricing and non-expiring credits reduce subscription pressure; the Creator tier adds a commercial license and API access for professional and integration use.

If you’re unsure before committing, compare the Seedance 2.0 pricing plans and the Seedance 2.0 alternatives. For hands-on prompt guidance, the Seedance 2.0 Prompt Guide is a practical next step.

9. Frequently Asked Questions

01 Is Seedance 2.0 good for commercial use?

Yes. Based on our testing, Seedance 2.0 is suitable for commercial projects such as marketing videos, client work, and monetized content. Licensing varies by tier: the pricing table lists a commercial license only under the Creator plan, so confirm the terms before publishing.

02 Does Seedance 2.0 have a free plan?

No. At the time of testing, Seedance 2.0 does not provide free credits or a free plan. Access requires a paid credit pack, which may limit casual experimentation compared to some competitors.

03 What kind of videos is Seedance 2.0 best for?

In our tests, Seedance 2.0 performs best for short cinematic clips, social media content, and stylized visuals. It handles motion and scene transitions better than many entry-level AI video tools.

04 Does Seedance 2.0 support both text and image inputs?

Yes. It supports both text-to-video and image-to-video workflows. In practice (and in our testing), image-based inputs often produce more stable and controllable results.

05 Is Seedance 2.0 worth paying for?

It depends on your needs. If you are looking for consistent motion quality and fast video generation, Seedance 2.0 is a strong option. However, for fully cinematic output, some higher-end models may still perform better.

06 How does Seedance 2.0 compare to Sora or Kling?

Compared to Sora and Kling, Seedance 2.0 offers a more accessible and faster workflow. While it may not reach the same level of cinematic realism as top-tier models, it provides a strong balance between quality and usability.

07 Is Seedance 2.0 beginner-friendly?

Yes. The interface is relatively simple, and most users can generate videos with basic prompts. That said, better prompts still lead to noticeably higher-quality results.